Server-side sandboxing: An introduction

In this three-part series, our security engineering team shares practical tips for deploying and operating application sandboxing techniques. First up: evaluating the many sandboxing options, and how to think about the trade-offs between them.

Artwork by Pete Gamlen.

Image processing, parsing, compression, and thumbnailing—creating a small thumbnail image of a large design file—are common image or data processing operations.

Often, the libraries that perform these operations are written in memory-unsafe languages like C++. Many popular libraries have a history of memory corruption vulnerabilities.

ImageTragick was a famous, critical security vulnerability discovered in 2016 in the commonly used ImageMagick library. It allowed remote code execution in any service that ran ImageMagick server-side on user-provided images.

Buggy software is a fact of life, security threats are inevitable, and it’s nearly impossible to prevent all vulnerabilities. To power services for users, feature-rich apps rely on potentially risky software like image processing, parsing, compression, and thumbnailing libraries inside their infrastructure. Third parties frequently implement this software—which is seldom designed to handle hostile user input—in memory-unsafe languages. It’s no surprise that critical vulnerabilities like ImageTragick have appeared in the wild.

Fortunately, application-level sandboxing (also known as workload isolation) can be a powerful defense in these scenarios. In the past, sandboxing technologies were often expensive, immature, and operationally fickle, so only the best-resourced security organizations could use them effectively at scale. This is one of the reasons teams significantly underused sandboxing, despite its effectiveness as a pillar of a layered defense-in-depth architecture. Sandboxing remains an active area of research, and security teams have recently invested in developing more usable and stable solutions. Today, there is a surfeit of ways to virtualize, contain, and isolate processing.

With so many options, it can be daunting to choose the right combination of sandboxing techniques and make informed trade-offs for isolating your workloads. We’ve had to make these same trade-offs and experiment with deploying different primitives at Figma. In this article, we’ll explain what sandboxing is and why you might want to use it. We’ll give a high-level overview of several common sandboxing primitives, including how each one works and what security properties it offers, and provide a lightweight questionnaire to help you evaluate the trade-offs between different sandboxing approaches.

Server-side sandboxing, explained

Some critical features in modern SaaS applications introduce inherent risks that security teams have to mitigate. For example, at Figma, we create tools for product teams to brainstorm, create, and ship designs together. On the client side, we rely on various browser sandboxing technologies and techniques, like WebAssembly, to provide a secure and responsive editing experience. But on the server side, we rely on our own libraries to provide the design features our users need, such as RenderServer, a stripped-down server-side version of the Figma editor written in C++. We also use third-party libraries to process user-generated graphical data, which could contain malicious input.

At Figma, we don’t want to run jobs on potentially malicious input directly inside our infrastructure. If we did, one serious bug could allow that job to access user data from other jobs, make requests to other production services, or move laterally and compromise additional components of production. One solution would be to rewrite all of our unsafe code in a memory-safe language and leverage program analysis techniques to prove its correctness and security properties. But as with many other complex real-world systems, this would require pulling resources away from other critical security projects and in turn present a number of other trade-offs. (Even then, there’s still a risk that we might inadvertently introduce a security bug anyway, because no methods are foolproof. We’re all human.)

It’s expensive and unrealistic to rely entirely on preventing security vulnerabilities. Instead, we also use sandboxing to mitigate the impact of those bugs when they do come up. While there are many different strategies we could use, sandboxing also allows us to minimize and better manage the external interfaces and resources that a compromised workload can access, effectively containing an attack to the affected system.

Common sandboxing approaches

Seccomp, short for secure computing mode, can restrict the system calls a program is allowed to make.

There are several approaches to building a security sandbox: virtual machines (VMs), containers, and secure computing mode (seccomp). These solutions get complicated quickly, so we’re sharing a brief overview here before we dive into the pros and cons of VMs, containers, and seccomp in the next two parts of this series.

Server-side sandboxing: Virtual machines

Server-side sandboxing: Containers and seccomp

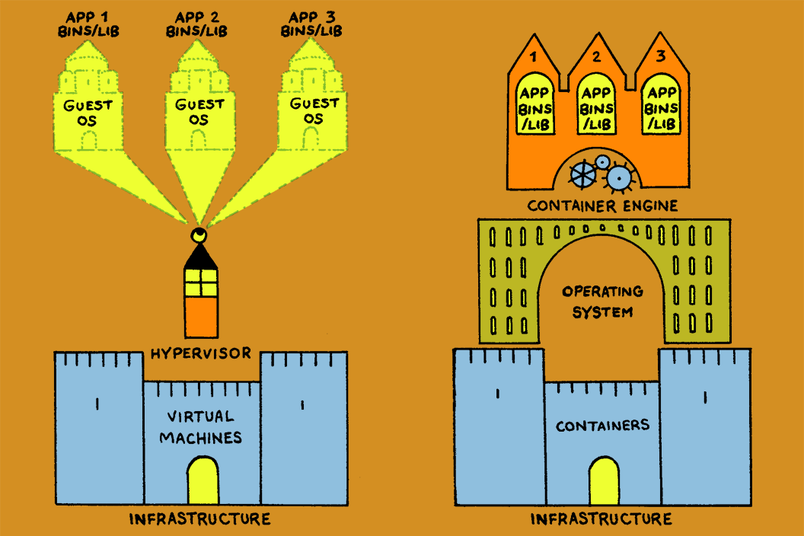

Virtual machines

Firecracker, Xen, and KVM are all examples of commonly used hypervisor technologies.

A VM is a guest virtual computer that behaves like a real physical computer with its own memory, disk, and CPU. VMs have been around for decades, and today, there are various types of virtualization and different approaches to implementation. Typically, the interface between the guest VM and the host system is a hypervisor, which manages execution of the guest VM and provides an interface for the guest to access host hardware resources. At Figma, we’ve primarily used Firecracker as an isolation primitive via our use of AWS Lambda.

Containers

nsjail is a command-line tool that leverages Linux namespaces, capabilities, filesystem restrictions, cgroups, resource limits, and seccomp to achieve isolation.

Unlike VMs, container isolation happens at the operating system (OS) layer and typically relies on features of the host’s kernel, such as namespaces, cgroups, and privilege dropping. Depending on the implementation, containers tend to incur less performance overhead than VMs because they can call the host OS’s system calls (syscalls) directly rather than having to execute in the context of a full hypervisor. At Figma, in use cases where container-level security isolation is appropriate, we primarily use nsjail.
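To make the container approach a little more concrete, here is a minimal sketch of how a job might be wrapped in an nsjail invocation. It is not Figma's actual configuration: the helper name `build_nsjail_argv`, the chroot path, the uid/gid, and the limits are all assumptions chosen for illustration. The flags themselves (`--mode`, `--chroot`, `--user`, `--group`, `--time_limit`, `--rlimit_as`) are standard nsjail options.

```python
from typing import List


def build_nsjail_argv(
    job_argv: List[str],
    chroot_dir: str = "/srv/sandbox-root",  # hypothetical path, not from the article
    time_limit_s: int = 30,                 # illustrative limit
    memory_limit_mb: int = 512,             # illustrative limit
) -> List[str]:
    """Build an nsjail command line that runs job_argv once inside a jail."""
    if not job_argv:
        raise ValueError("job_argv must name a program to run")
    return [
        "nsjail",
        "--mode", "o",                        # launch a single process, then exit
        "--chroot", chroot_dir,               # restrict the job's filesystem view
        "--user", "99999",                    # unprivileged uid inside the jail
        "--group", "99999",                   # unprivileged gid inside the jail
        "--time_limit", str(time_limit_s),    # maximum jail lifetime in seconds
        "--rlimit_as", str(memory_limit_mb),  # address-space limit in MB
        "--",                                 # everything after this is the job
        *job_argv,
    ]
```

The resulting list can be passed to `subprocess.run(...)` on a host where nsjail is installed. Building the arguments as a list, rather than as a shell string, keeps untrusted file names from being interpreted by a shell.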

Seccomp

A seccomp-driven approach recognizes that many isolatable programs perform pure computation and thus do not need dynamic access to any disk or network at all. This significantly reduces the set of features needed, and the associated performance overhead, to achieve the desired level of isolation. To do this safely at Figma, we restrict the system calls the program is allowed to make using the seccomp feature built into the Linux kernel, producing an allowlist of operations the underlying program is permitted to perform. Ideally, this limits the program to allocating memory, producing its output, and exiting. We built this directly into portions of the Figma codebase using libseccomp.
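The allowlist idea can be illustrated with a small conceptual model. This sketch does not install a kernel filter, and it is not how the Figma codebase is written. Real enforcement would go through libseccomp (for example `seccomp_init`, `seccomp_rule_add`, and `seccomp_load` in C). The class name, the helper, and the specific syscall set below are assumptions for illustration; a real program usually needs a longer list, found by tracing it.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, Optional


@dataclass(frozen=True)
class SyscallAllowlist:
    """Default-deny policy: anything not explicitly allowed is killed."""
    allowed: FrozenSet[str]

    def decide(self, syscall: str) -> str:
        return "ALLOW" if syscall in self.allowed else "KILL"


def first_violation(policy: SyscallAllowlist, trace: Iterable[str]) -> Optional[str]:
    """Return the first syscall in a trace that the policy would kill, if any."""
    for syscall in trace:
        if policy.decide(syscall) == "KILL":
            return syscall
    return None


# Illustrative policy for a pure-computation job: read input from an
# already-open descriptor, allocate memory, write output, and exit.
PURE_COMPUTE = SyscallAllowlist(
    frozenset({"read", "write", "brk", "mmap", "munmap", "exit_group"})
)
```

Replaying a recorded syscall trace through `first_violation` is one way to see which calls a workload would need before tightening a real filter. The key design choice is the default: unknown syscalls are denied rather than allowed.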

Finding the best fit

Sandboxing is not without its challenges. The options outlined above all make different trade-offs between security, ease of development and maintenance, and runtime performance. For instance, while a seccomp-driven approach offers generally strong security properties and minimal runtime overhead, it may involve substantial initial development cost. Meanwhile, a container-driven approach has more potential security pitfalls and often reduced runtime performance, but is likely far easier to ship.

Below we’ve outlined the questions that will help you match your team’s needs to the right sandboxing solution. Once you have a sense of the different high-level trade-offs, see our in-depth discussions of VMs and of containers and seccomp in the other two parts of this series.

Environment

- Does your workload need to run inside a specific OS or other specialized environment?

Security and performance

- How strong a sandbox do you need?

- How much attack surface are you willing to accept?

- What level of isolation do you need? (e.g. Do you need to isolate each job? Or only between users? Or only between projects, teams, or organizations among your users?)

- Are you willing to accept some trade-offs among level of isolation, performance, and development complexity?

- How much latency can you tolerate?

- What is your budget for compute and other infrastructure costs?

Development costs and friction

- Do you have the expertise to modify your workload code to craft a tailor-made sandboxing solution, or do you need an off-the-shelf, drop-in solution? Is something between these two extremes acceptable?

- How much development cost and complexity are you willing to take on?

Maintenance and operational overhead

- Is your workload code under active development?

- Are you willing to run your own service, and do you have the resources to do it?

- Will another team own this system, and are they willing to bear the operational overhead and additional complexity?

- What level of debugging access will engineers require?
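As a rough illustration of how a few of these answers might narrow the field, the sketch below encodes three of the questions as a small helper. It is a simplified heuristic built only from the trade-offs described in this article, not a decision procedure we use at Figma. The type and function names, and the ordering of the results, are assumptions.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class WorkloadAnswers:
    needs_specific_os: bool         # must run inside a particular OS or environment
    pure_computation: bool          # needs no dynamic disk or network access
    can_modify_workload_code: bool  # team can build a tailor-made sandbox


def candidate_primitives(answers: WorkloadAnswers) -> List[str]:
    """Return sandboxing primitives worth evaluating, most specific first."""
    if answers.needs_specific_os:
        # Containers and seccomp share the host kernel, so only a VM
        # brings its own guest OS.
        return ["vm"]
    candidates = []
    if answers.pure_computation and answers.can_modify_workload_code:
        # Strong security and low overhead, at a higher development cost.
        candidates.append("seccomp")
    # Containers are typically easier to ship; VMs remain an option.
    candidates.extend(["container", "vm"])
    return candidates
```

The remaining questions (latency, budget, ownership, debugging access) are harder to reduce to booleans and are better treated as discussion prompts for comparing whatever candidates remain.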

Isolating dangerous or insecure workloads is a key tool for security teams. At Figma, sandboxing allows us to build valuable new product features for users while minimizing both risk to our infrastructure and the cost of each new feature. For a more in-depth look at sandboxing primitives, check out our guides to VMs and to containers and seccomp.

Scaling our security is essential for Figma’s success. If you’re interested in working on projects like this, we’re hiring!