A/B test

What is A/B testing?

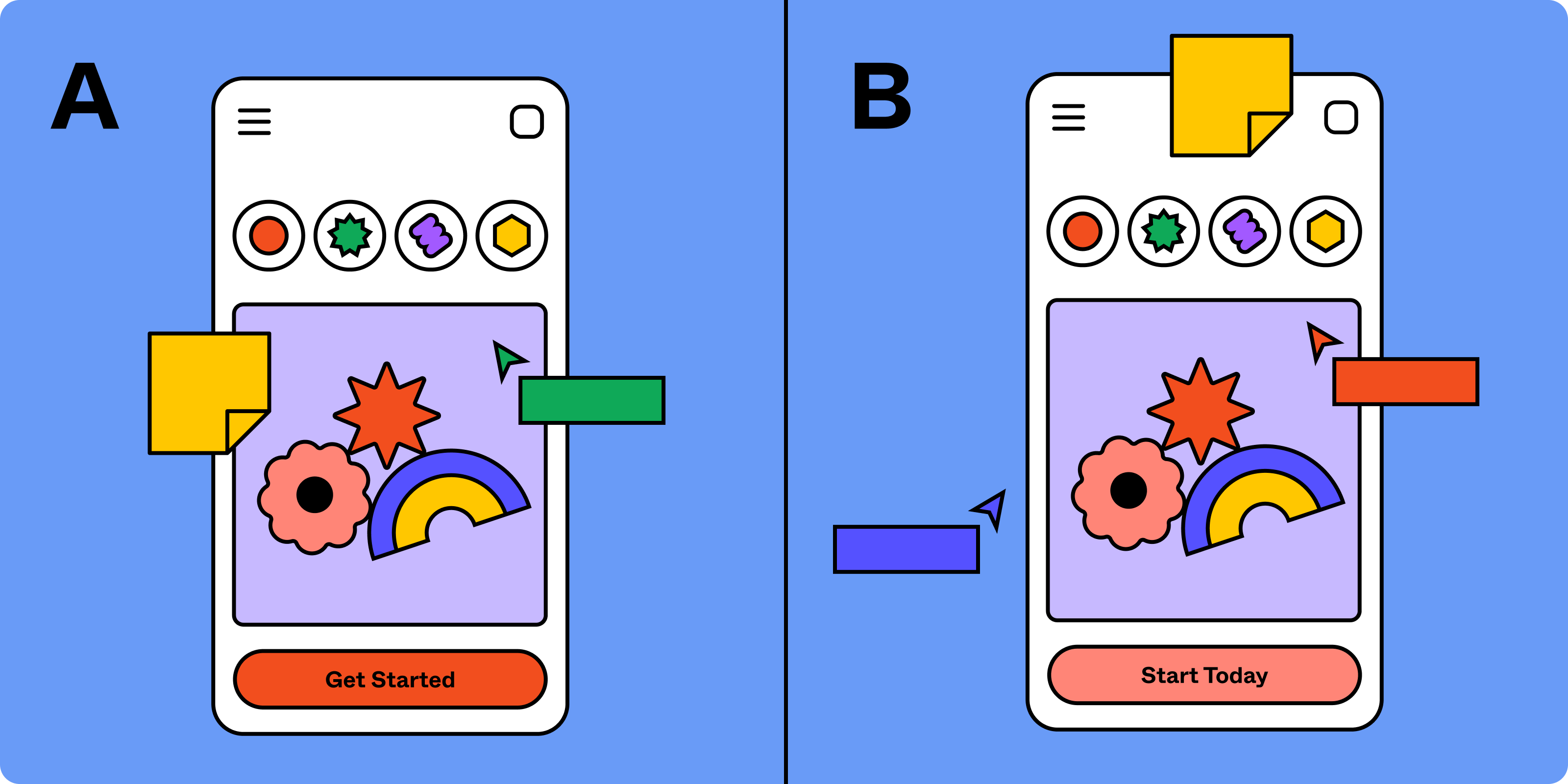

At its core, A/B testing (also known as split testing or bucket testing) is a method of comparing two versions of a webpage, an app feature, or any other user-facing element to determine which one performs better. You show the two variants, A (the control or baseline) and B (the variation), to similar visitors at the same time, typically by randomly assigning each visitor to one of them. The variant with the better conversion rate wins.
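One common way to show variants to similar visitors at the same time is to assign each visitor to A or B pseudo-randomly but deterministically, so a returning visitor keeps seeing the same version. The Python sketch below hashes a hypothetical experiment name together with a user ID. The function name `assign_variant`, the 50/50 default, and the choice of SHA-256 are illustrative assumptions, not any particular testing tool's method.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, split: float = 0.5) -> str:
    """Deterministically assign a user to variant "A" or "B".

    Hashing the (hypothetical) experiment name with the user ID gives each
    user a stable bucket in [0, 1), so the same user always lands in the
    same variant, while traffic overall divides roughly split / (1 - split).
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Use the first 8 hex digits (32 bits) and scale them into [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return "A" if bucket < split else "B"


# Usage: simulate 10,000 hypothetical visitors; expect a roughly even split.
counts = {"A": 0, "B": 0}
for i in range(10_000):
    counts[assign_variant(f"user-{i}", "cta-color-test")] += 1
print(counts)
```

Salting the hash with the experiment name means a user who lands in A for one test is not automatically placed in A for every other test.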

A/B testing is part of the broader field of conversion rate optimization (CRO), which aims to increase the percentage of customers who complete a desired action. A/B testing lets you make data-driven decisions instead of relying on guesswork or personal bias, so you can verify that the changes you make actually contribute to your marketing strategy.

How A/B testing works

Here are the essential steps for running an effective A/B test to enhance your customer experience:

1. Determine your goal

Before you start A/B testing, first figure out what you want to achieve. Use user research to help set clear goals, like increasing conversion rates from an email campaign or making your software easier to use. This way, you can make sure you're testing the right variables, instead of just guessing. By combining research with testing, you're more likely to solve real problems and make meaningful improvements.

2. Create a hypothesis

Once you've identified your goal, develop hypotheses about how changes to your homepage or digital marketing strategy could help users reach that goal more easily. A framework like "If I change X, it will have Y effect" can help shape your hypothesis, but a strong hypothesis covers three key aspects: the presumed problem, the proposed solution, and the anticipated result. For instance, if your goal is to increase the click-through rate (CTR) on a landing page, your hypothesis might be: "Our landing page's CTR is low; changing the call-to-action (CTA) button color will increase it." This hypothesis identifies the problem (low CTR), proposes a solution (changing the CTA button color), and anticipates a result (higher CTR).
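In statistical terms, a hypothesis like the CTA example is tested against a null hypothesis of "no difference." Writing $p_A$ and $p_B$ for the true click-through rates of the control and the variation (notation introduced here for illustration), a one-sided test compares:

```latex
\begin{aligned}
H_0 &: p_B = p_A && \text{(the new CTA color has no effect on CTR)} \\
H_1 &: p_B > p_A && \text{(the new CTA color increases CTR)}
\end{aligned}
```

If a change could plausibly hurt as well as help, the two-sided alternative $H_1 : p_B \neq p_A$ is the more conservative choice.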

3. Develop two versions

After coming up with your hypothesis, create the two variations of the webpage or email you are going to test. For e-commerce websites, look at elements such as fonts, colors, and where your CTA button goes. For marketing campaigns, think about the email subject line, how you lay out your content, when you send your emails, and who you send them to. Always make sure the sample size from your target audience is large enough to trust the results, and decide how many visitors each version needs before you launch. Once your test is running, use a tracking tool like Google Analytics to monitor user behavior on both versions in real time, but avoid declaring a winner before the planned sample size is reached.
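To decide whether a sample is "big enough," you can estimate it up front. The sketch below uses the standard normal-approximation formula for comparing two proportions with a two-sided test. The function name is hypothetical, you supply the baseline rate and the rate you hope the variation reaches, and the defaults of a 5% significance level and 80% power are common conventions rather than requirements.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate: float, expected_rate: float,
                            alpha: float = 0.05, power: float = 0.8) -> int:
    """Visitors needed in EACH variant to detect a change from baseline_rate
    to expected_rate with a two-sided two-proportion z-test."""
    if not (0 < baseline_rate < 1 and 0 < expected_rate < 1):
        raise ValueError("rates must be strictly between 0 and 1")
    if baseline_rate == expected_rate:
        raise ValueError("expected_rate must differ from baseline_rate")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided critical value
    z_beta = NormalDist().inv_cdf(power)           # quantile for desired power
    p_bar = (baseline_rate + expected_rate) / 2    # average of the two rates
    spread = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
              + z_beta * math.sqrt(baseline_rate * (1 - baseline_rate)
                                   + expected_rate * (1 - expected_rate)))
    # Round up: a fractional visitor still means one more visitor is needed.
    return math.ceil(spread ** 2 / (baseline_rate - expected_rate) ** 2)


# Usage: hypothetical 10% baseline CTR, hoping the variation reaches 12%.
n = sample_size_per_variant(0.10, 0.12)
print(n)
```

Smaller expected improvements need disproportionately more visitors, because the required sample grows with the inverse square of the difference between the two rates.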

4. Analyze test data

After your test is done and you've gathered enough data, it's time to dig into the A/B test results. You're looking for which version, A or B, performed better on metrics like clicks or conversions per visitor. To reach a sound conclusion, combine qualitative data, such as user feedback from surveys, with quantitative metrics, such as revenue per visit (RPV), bounce rate, or average time spent on page (ATOP). Check for statistical significance to confirm that the difference between the versions is unlikely to be due to chance alone and actually shows which option was more effective for your goals.
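For a conversion metric, one standard significance check is the two-proportion z-test, which relies on a normal approximation that holds when each variant has a reasonably large number of visitors. The sketch below is a minimal standard-library version. The function name and the example counts are hypothetical, and it covers only the quantitative conversion comparison, not qualitative feedback.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for H0: A and B share one conversion rate."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled conversion rate, assuming the null hypothesis of no difference.
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("all or no visitors converted; the test is undefined")
    z = (p_b - p_a) / se
    # Probability of a difference at least this large in either direction.
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Usage with made-up counts: A converts 400 of 4,000, B converts 480 of 4,000.
z, p = two_proportion_z_test(400, 4000, 480, 4000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

With these illustrative counts, z is about 2.86 and the p-value is below 0.01, so the difference would be significant at the conventional 0.05 level.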

What are the different types of A/B tests?

A/B tests can be classified into three types: A/B testing, split URL testing, and multivariate testing. Let's look at each type:

1. A/B testing

A/B testing, as described earlier, compares two versions of a web page or other user-facing element to see which one performs better. You show the control and the variant to randomly assigned groups of users at the same time and analyze their interactions to determine the winner.

2. Split URL testing

In Split URL testing, you create an entirely new version of a web page and host it on a different URL. You then divide your traffic between the original page (the control) and the new page (the variant) and compare their performances.

3. Multivariate testing (MVT)

Multivariate testing takes A/B testing a step further by testing multiple variables simultaneously. In an MVT, you create different versions of several elements on a page and test every combination of them to find the combination that performs best with statistically significant results.
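To see why multivariate tests need more traffic, it helps to enumerate the combinations. The sketch below builds a full-factorial design with `itertools.product`; the element names, their versions, and the traffic figure are hypothetical placeholders. Two headlines, three CTA colors, and two hero images already yield 2 × 3 × 2 = 12 combinations, each receiving only a twelfth of the traffic.

```python
from itertools import product


def mvt_combinations(elements: dict[str, list[str]]) -> list[dict[str, str]]:
    """Return every combination of element versions (a full-factorial design)."""
    names = list(elements)
    return [dict(zip(names, combo)) for combo in product(*elements.values())]


# Hypothetical page elements and the versions of each to test.
page_elements = {
    "headline": ["Original headline", "Benefit-led headline"],
    "cta_color": ["blue", "green", "orange"],
    "hero_image": ["photo", "illustration"],
}

combos = mvt_combinations(page_elements)
print(len(combos))  # 12 (2 * 3 * 2)
print(combos[0])    # {'headline': 'Original headline', 'cta_color': 'blue', 'hero_image': 'photo'}

# With a fixed (hypothetical) traffic budget, each combination gets only a slice.
monthly_visitors = 24_000
print(monthly_visitors // len(combos))  # 2000 visitors per combination
```

This multiplicative growth is why multivariate tests generally need far more traffic than a simple A/B test to reach statistical significance for every combination.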

Related terms

Looking for more info? Check out these related terms.

A. Customer journey, noun:

The customer journey is the complete sum of experiences that customers go through when interacting with a brand or product. From initial awareness to conversion, understanding the journey can help you create more …

B. Usability, noun:

Usability refers to how easy it is for users to achieve desired tasks within a particular system or interface. By focusing on usability, designers can improve user satisfaction and …

C. UI/UX design, noun:

UI/UX Design stands for User Interface and User Experience design, a field that encompasses a user's interaction with digital products like websites and apps. Design elements, layout, and user flows are meticulously planned to …